edge matter and where they are

Very small, prefabricated data centers are starting to compete against colocation facilities, cloud service providers, and even on-premises IT deployments. Edge computing could either redistribute the enterprise market or scramble it all up.

By Scott Fulton III

EXECUTIVE SUMMARY

A digital network is a transportation system for information. In this network, an "edge" comprises servers extended as far out as possible, to reduce the time it takes to serve users. There are two possible strategies at work here:

- Data streams, audio, and video may be received faster and with fewer pauses (preferably none at all) when servers are separated from their users by a minimum of intermediate routing points, or "hops." Content delivery networks (CDN) such as Akamai and Cloudflare are built around this strategy.

- Applications may be expedited when their processors are stationed closer to where the data is collected. This is especially true for applications for logistics and large-scale manufacturing, as well as for the Internet of Things (IoT) where sensors or data-collecting devices are numerous and highly distributed.

In the context of edge computing, the edge is the location on the planet where processors may deliver functionality to customers most expediently. Depending on the application, when one strategy or the other is employed, these processors may end up on one end of the network or the other. Because the Internet isn't built like the old telephone network, "closer" in terms of routing expediency is not necessarily closer in geographical distance. And depending upon how many different types of service providers your organization has contracted with -- public cloud applications providers (SaaS), apps platform providers (PaaS), leased infrastructure providers (IaaS), content delivery networks -- there may be multiple tracts of IT real estate vying to be "the edge" at any one time.
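To see why "closer" on the network map can differ from closer on the geographical map, consider a rough sketch of round-trip delay as the sum of propagation time and per-hop forwarding time. The 200 km-per-millisecond figure is the approximate speed of light in optical fiber; the 0.5 ms per-hop delay, the path lengths, and the hop counts are illustrative assumptions, not measurements from any provider.

```python
# Approximate distance light covers in optical fiber per millisecond.
FIBER_KM_PER_MS = 200.0


def round_trip_ms(path_km, hops, per_hop_ms=0.5):
    """Estimate round-trip delay from path length and hop count.

    per_hop_ms is a hypothetical average forwarding delay per router.
    """
    one_way = path_km / FIBER_KM_PER_MS + hops * per_hop_ms
    return 2 * one_way


# A server that is geographically near but reached through many hops...
near_but_indirect = round_trip_ms(path_km=50, hops=12)
# ...versus one that is farther away but reached through few hops.
far_but_direct = round_trip_ms(path_km=400, hops=3)

print(f"near, 12 hops: {near_but_indirect:.1f} ms")
print(f"far, 3 hops:   {far_but_direct:.1f} ms")
```

Under these assumptions, the more distant server answers sooner, which is the sense in which an edge is defined by routing rather than by mileage.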

The future of both the communications and computing markets may depend on how those points on the geographical map, and the points on the network map, are finally interfaced. Where those points reside, especially as 5G Wireless networks are being built, may end up determining who gets to control them, and who gets to regulate them.

THE CURRENT TOPOLOGY OF ENTERPRISE NETWORKS

There are three places most enterprises tend to deploy and manage their applications and services:

- On-premises, where data centers house multiple racks of servers, where they're equipped with the resources needed to power and cool them, and where there's dedicated connectivity to outside resources

- Colocation facilities, where customer equipment is hosted in a fully managed building where power, cooling, and connectivity are provided as services

- Cloud service providers, where customer infrastructure may be virtualized to some extent, and services and applications are provided on a per-use basis, enabling operations to be accounted for as operational expenses rather than capital expenditures

The architects of edge computing would seek to add their design as a fourth category to this list: one that leverages the portability of micro data centers (µDC) and small form-factor servers to reduce the distances between the processing point and the consumption point of functionality in the network. If their plans pan out, they seek to accomplish the following:

POTENTIAL BENEFITS

- Minimal latency. The problem with cloud computing services today is that they're slow, especially for artificial intelligence-enabled workloads. This essentially disqualifies the cloud for serious use in deterministic applications, such as real-time securities markets forecasting, autonomous vehicle piloting, and transportation traffic routing. Processors stationed in small data centers closer to where their processes will be used could open up new markets for computing services that cloud providers haven't been able to address up to now.

- Simplified maintenance. For an enterprise that doesn't have much trouble dispatching a fleet of trucks or maintenance vehicles to field locations, micro data centers (µDC) are designed for maximum accessibility, modularity, and a reasonable degree of portability. They're compact enclosures, some small enough to fit in the back of a pickup truck, that can support just enough servers for hosting time-critical functions, that can be deployed closer to their users. Conceivably, for a building that presently houses, powers, and cools its data center assets in its basement, replacing that entire operation with three or four µDCs somewhere in the parking lot could be an improvement.

- Cheaper cooling. For large data center complexes, the monthly cost of electricity used in cooling can easily exceed the cost of electricity used in processing. The industry's standard measure of this overhead is power usage effectiveness (PUE): the ratio of the total energy a facility consumes to the energy consumed by its IT equipment alone. At times, this has been the baseline measure of data center efficiency (although in recent years, surveys have shown fewer IT operators know what this ratio means). Theoretically, it may cost a business less to cool and condition several smaller data center spaces than it does one big one. Plus, due to the peculiar ways in which some electricity service areas handle billing, the cost per kilowatt may go down across the board for the same server racks hosted in several small facilities rather than one big one. A recent white paper published by Schneider Electric assessed all the major and minor costs associated with building traditional and micro data centers. While an enterprise could incur just under $7 million in capital expenses for building a traditional 1 MW facility, it would spend just over $4 million to build 200 facilities of 5 kW each.
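The capital expense comparison in the last bullet can be put on a per-kilowatt basis with a few lines of arithmetic. This is only a sketch: the dollar amounts are rounded to $7 million and $4 million, whereas the figures quoted are "just under" and "just over" those amounts, so the results are approximate.

```python
# Rounded capital expense figures from the comparison above (assumed exact here).
TRADITIONAL_CAPEX_USD = 7_000_000   # one 1 MW facility
TRADITIONAL_KW = 1_000

MICRO_CAPEX_USD = 4_000_000         # 200 micro data centers in total
MICRO_COUNT = 200
MICRO_KW_EACH = 5

micro_total_kw = MICRO_COUNT * MICRO_KW_EACH

traditional_usd_per_kw = TRADITIONAL_CAPEX_USD / TRADITIONAL_KW
micro_usd_per_kw = MICRO_CAPEX_USD / micro_total_kw
savings_fraction = 1 - micro_usd_per_kw / traditional_usd_per_kw

print(f"total micro capacity: {micro_total_kw} kW")
print(f"traditional: ${traditional_usd_per_kw:,.0f} per kW")
print(f"micro:       ${micro_usd_per_kw:,.0f} per kW")
print(f"approximate savings: {savings_fraction:.0%}")
```

Both options supply the same 1 MW of capacity, so the difference comes down to roughly $7,000 versus $4,000 per kilowatt under these rounded inputs.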

There's also a certain ecological appeal to the idea of distributing computing power to customers across a broader geographical area, as opposed to centralizing that power in mammoth, hyperscale facilities, and relying upon high-bandwidth fiber-optic links for connectivity.

Edge computing has been touted as one of the lucrative, new markets made feasible by 5G Wireless technology. For the global transition from 4G to 5G to be economically feasible for many telecommunications companies, the new generation must open up new, exploitable revenue channels. 5G requires a vast, new network of (ironically) wired, fiber optic connections to supply transmitters and base stations with instantaneous access to digital data (the backhaul). As a result, an opportunity arises for a new class of computing service providers to deploy multiple µDCs adjacent to radio access network (RAN) towers, perhaps next to, or sharing the same building with, telco base stations. These data centers could collectively offer cloud computing services to select customers at rates competitive with, and with features comparable to, those of hyperscale cloud providers such as Amazon, Microsoft Azure, and Google Cloud Platform.

Ideally, perhaps after a decade or so of evolution, edge computing would bring fast services to customers as close as their nearest wireless base stations. We'd need massive fiber optic pipes to supply the necessary backhaul, but the revenue from edge computing services could conceivably fund their construction, enabling it to pay for itself.

If all this sounds like too complex a system to be feasible, keep in mind that in its present form, the public cloud computing model may not be sustainable long-term. That model would have subscribers continue to push applications, data streams, and content streams through pipes linked with hyperscale complexes whose service areas encompass entire states, provinces, and countries -- a system that wireless voice providers would never dare have attempted.

POTENTIAL PITFALLS

Nevertheless, a computing world entirely remade in the edge computing model is about as fantastic -- and as remote -- as a transportation world that's weaned itself entirely from petroleum fuels. In the near term, the edge computing model faces some significant obstacles, several of which will not be altogether easy to overcome:

- Remote availability of three-phase power. Servers capable of providing cloud-like remote services to commercial customers, regardless of where they're located, need high-power processors and in-memory data, to enable multi-tenancy. Probably without exception, they'll require access to high-voltage, three-phase electricity. That's extremely difficult, if not impossible, to attain in relatively remote, rural locations. (Ordinary 120V AC current is single-phase.) Telco base stations have never required this level of power up to now, and if they're never intended to be leveraged for multi-tenant commercial use, then they may never need three-phase power anyway. The only reason to retrofit the power system will be if edge computing is viable. But for widely distributed Internet-of-Things applications such as Mississippi's trials of remote heart monitors, a lack of sufficient power infrastructure could end up once again dividing the "haves" from the "have-nots."

- Carving servers into protected virtual slices. For the 5G transition to be affordable, telcos must reap additional revenue from edge computing. What made the idea of tying edge computing evolution to 5G appealing was the notion that commercial and operational functions could co-exist on the same servers -- a concept introduced by Central Office Re-imagined as a Datacenter (CORD), one form of which is now considered a key facilitator of 5G Wireless. The trouble is, it may not even be legal for operations fundamental to the telecommunications network to co-reside with customer functions on the same systems -- the answers depend on whether lawmakers are capable of fathoming the new definition of "systems." Until that day (if it ever comes), 3GPP (the industry organization governing 5G standards) has adopted a concept called network slicing, which is a way to carve telco network servers into virtual servers at a very low level, with much greater separation than in a typical virtualization environment from, say, VMware. Conceivably, a customer-facing network slice could be deployed at the telco networks' edge, serving a limited number of customers. However, some larger enterprises would rather take charge of their own network slices, even if that means deploying them in their own facilities -- moving the edge onto their premises -- than invest in a new system whose value proposition is based largely on hope.

- Telcos are defending their home territories from local breakouts. If the 5G radio access network (RAN), and the fiber optic cables linked to it, are to be leveraged for commercial customer services, some gateway has to be in place to siphon off private customer traffic from telco traffic. The architecture for such a gateway already exists, and has been formally adopted by 3GPP. It's called local breakout, and it's also part of the ETSI standards body's official declaration of multi-access edge computing (MEC). So technically, this problem has been solved. The trouble is, certain telcos may have an interest in preventing the diversion of customer traffic away from the course it would normally take: into their own data centers. Today's Internet network topology has three tiers: Tier-1 service providers peer only with one another, whereas Tier-2 ISPs are typically customer-facing. The third tier allows for smaller, regional ISPs on a more local level. Edge computing on a global scale could become the catalyst for public cloud-style services, offered by ISPs on a local level, perhaps through a kind of "chain store." But that's assuming the telcos, who manage Tier-2, are willing to just let incoming network traffic be broken out into a third tier, enabling competition in a market they could very easily claim for themselves.

If location, location, location matters again to the enterprise, then the entire enterprise computing market can be turned on its ear. The hyperscale, centralized, power-hungry nature of cloud data centers may end up working against them, as smaller, more nimble, more cost-effective operating models spring up -- like dandelions, if all goes as planned -- in more broadly distributed locations.

"I believe the interest in edge deployments," remarked Kurt Marko, principal of technology analysis firm Marko Insights, in a note to ZDNet, "is primarily driven by the need to process massive amounts of data generated by 'smart' devices, sensors, and users -- particularly mobile/wireless users. Indeed, the data rates and throughput of 5G networks, along with the escalating data usage of customers will require mobile base stations to become mini data centers."

THE BLURRY BORDERLINES OF THE EDGE

Edge computing is an effort to bring the quality of service (QoS) back into the discussion of data center architecture and services, as enterprises decide not just who will provide their services, but also where.

Since the 1960s, the people who have made a living thinking about business engineering have talked about the concept of "business process alignment" -- about getting the business operations leaders and the IT operations leaders together on the same page. For over a half-century, it's been mostly talk. Business operations teams, led by the COO, make strategic decisions that technology teams, led merely by the CTO, must follow. IT is on the tail end of that discussion. They're tasked with not only overhauling their entire infrastructure -- with "digital transformation" -- but with reinventing the business. But they often don't have the authority to replace the equipment or the data center assets they presently own.

The cloud hasn't always been an option for some of them -- outsourcing some portion of their applications or their infrastructure to a third party. Suddenly, along comes the edge.

"The operations [people] are usually the tail end of the process, and most often, people don't even consult with the operations folks," explained Geetha Ram, who heads business development for HPE's edge computing line. "The operations are the ones who are given an idea from the business and the technology teams, they get the tail end of it, and they're told, 'Yea, here, go ahead and support this, and make it happen.'

"Edge computing is kind of a different beast for them," Ram continued, speaking to an audience at the 2019 Brooklyn 5G Summit. "From a scale perspective, it is huge, enormous compared to the data center. And, coupled with the fact that the edge locations do not have the skilled IT folks, how do we manage this? How do we deploy hundreds of thousands of these locations? That's the challenge for operations people."

THE 'OPERATIONS TECHNOLOGY' THEORY

Generally speaking, "the edge" is a theoretical space where a computing resource may be accessed in the minimum amount of time. In another era, that place was the desktop. But to enable that scenario, that resource had to be installed on every desktop, which meant it had to be maintained on every desktop. For a multi-national corporation, this quickly becomes impractical.

In a later era, the solution was the virtual desktop -- an image maintained in the data center, and later in the cloud, where applications were installed in a pre-conditioned, antiseptic virtual machine. But maintaining the pristine incorruptibility of the VDI image also, in due course, became impractical.

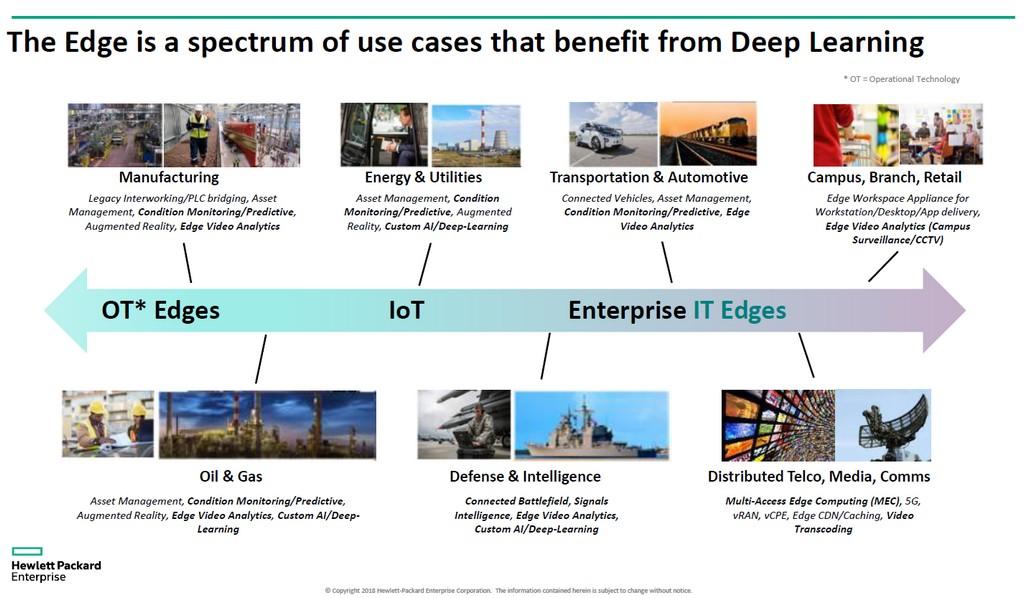

HPE's Ram believes that the next giant leap in operations infrastructure will be coordinated and led by staff and contractors who may not have much, if any, personal investment or training in hardware and infrastructure -- people who, up to now, have been largely tasked with maintenance, upkeep, and software support. Her company calls this class of personnel operational technology (OT). Unlike those who perceive IT and operations converging in one form or the other of "DevOps," HPE perceives three classes of edge computing customers. Not only will each of these classes, in its view, maintain its own edge computing platform, but the geography of these platforms will separate from one another, not converge, as this HPE diagram depicts.

Here, there are three distinct classes of customers, each of which HPE has apportioned its own segment of the edge at large. The OT class here refers to customers more likely to assign managers to edge computing who have less direct experience with IT, mainly because their main products are not information or communications itself. That class is apportioned an "OT edge." When an enterprise has more of a direct investment in information as an industry or is largely dependent upon information as a component of its business, HPE attributes to it an "IT edge." In-between, for those businesses that are geographically dispersed and dependent upon logistics (where the information has a more logical component) and thus the Internet of Things, HPE gives it an "IoT edge."

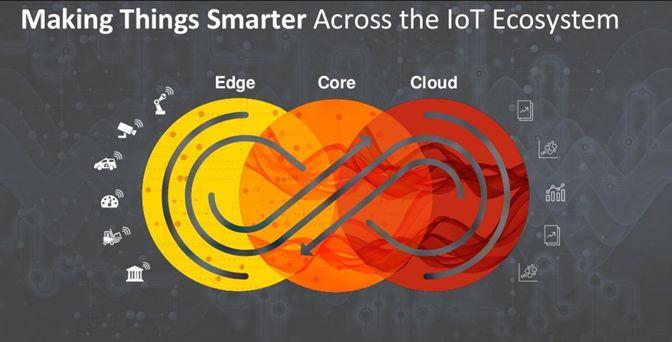

DELL'S THREE-PART VISION OF THE NETWORK

In 2017, Dell Technologies offered a three-tier topology for the computing market at large, dividing it into "core," "cloud," and "edge." As this slide from an early Dell presentation indicates, this division seemed radically simple, at least at first: Any customer's IT assets could be divided, respectively, into 1) what it owns and maintains with its own staff; 2) what it delegates to a service provider and hires it to maintain; and 3) what it distributes beyond its home facilities into the field, to be maintained by operations professionals (who may or may not be outsourced).

Since that time, however, Dell Technologies has leaned more and more on the interplay between divisions implied by its original diagram. In a November 2018 presentation for the Linux Foundation's Embedded Linux Conference Europe, CTO for IoT and Edge Computing Jason Shepherd made this simple case: With as many networked devices and appliances as are being planned for IoT, it will be technologically impossible to centralize their management, even if we enlist the public cloud.

"My wife and I have three cats," Shepherd told his audience. "We got larger storage capacities on our phones so that we could send cat videos back and forth.

"Cat videos explain the need for edge computing," he continued. "If I post one of my videos online, and it starts to get hits, I have to cache it on more servers, way back in the cloud. If it goes viral, then I have to move that content as close to the subscribers that I can get it to. As a telco, or as Netflix or whatever, the closest I can get is at the cloud edge -- at the bottom of my cell towers, these key points on the Internet. This is the concept of MEC, Multi-access Edge Computing -- bringing content closer to subscribers. Well now, if I have billions of connected cat callers out there, I've completely flipped the paradigm, and instead of things trying to pull down, I've got all these devices trying to push up. That makes you have to push the compute even further down."

THE TIERED NETWORK

As the very document you're reading now demonstrates, the world's computing services today are networked. ZDNet is one example of a service whose publishers have invested in servers stationed in strategic locations that make an effort to deliver you this document (and the multimedia content within it) in minimum time. From the perspective of the content delivery network (CDN) that accomplishes this, our servers are parked at the edge.

The providers of other services that we might otherwise lump together onto the already oversized pile called "the cloud" are each searching for their own edge. Data storage providers, cloud-native applications hosts, Internet of Things (IoT) service providers, server manufacturers, real estate investment trusts (REIT), and pre-assembled server enclosure manufacturers, are all paving express routes between their customers and what promises, for each of them, to be the edge.

What they're all looking for is a competitive advantage. The idea of an edge shines new hope on the prospects of premium service -- a solid, justifiable reason for certain classes of service to command higher rates than others. If you've read or heard elsewhere that the edge could eventually subsume the whole cloud, you may understand how this wouldn't make much sense. If everything were premium, nothing would be premium.

"Edge computing is apparently going to be the perfect technology solution, and venture capitalists say it's going to be a multi-billion-dollar tech market," remarked Kevin Brown, CTO and senior vice president for innovation for data center service equipment provider, and micro data center chassis manufacturer, Schneider Electric. "Nobody actually knows what it is."

Brown acknowledged that edge computing might attribute its history to the pioneering CDNs, such as Akamai. Still, he went on, "you've got all these different layers -- HPE has their version, Cisco has theirs. . . We couldn't make sense of any of that. Our view of the edge is really taking a very simplified view. In the future, there's going to be three types of data centers in the world, that you really have to worry about."

IS THE EDGE AT 'TIER-3?'

The picture Brown drew, during a press event at the company's Massachusetts headquarters last February, is a re-emerging view of a three-tiered Internet and is shared by a growing number of technology firms. In the traditional two-tiered model, Tier-1 nodes are restricted to peering with other Tier-1 nodes, while Tier-2 nodes handle data distribution on a regional level. Since the Internet's beginning, there has been a designation for Tier-3 -- for access at a much more local level. (Contrast this against the cellular Radio Access Network scheme, whose distribution of traffic is single-tiered.)

"The first point that you're connecting into the network, is really what we consider the local edge," explained Schneider Electric's Brown. Mapped onto today's technology, he went on, you might find one of today's edge computing facilities in any server shoved into a makeshift rack in a wiring closet.

"For our purposes," he went on, "we think that's where the action is."

VAPOR IO BUILDS A MESH BETWEEN MICRO DATA CENTERS

"The edge is not a technology land grab," remarked Cole Crawford, CEO of µDC producer Vapor IO. "It is a physical, real estate land grab."

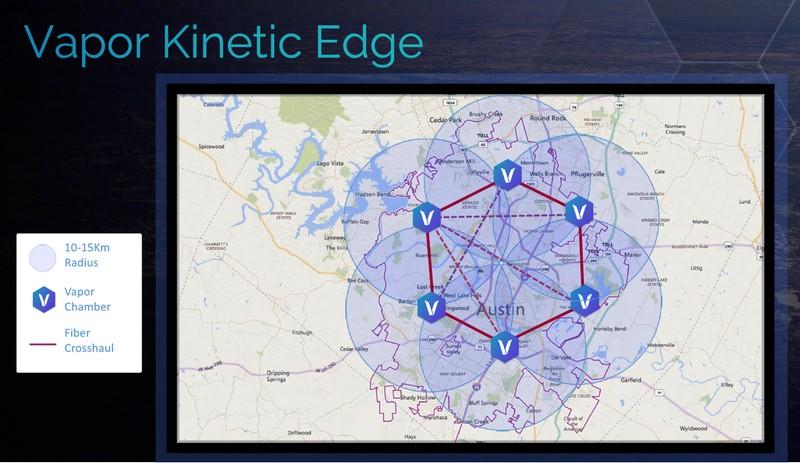

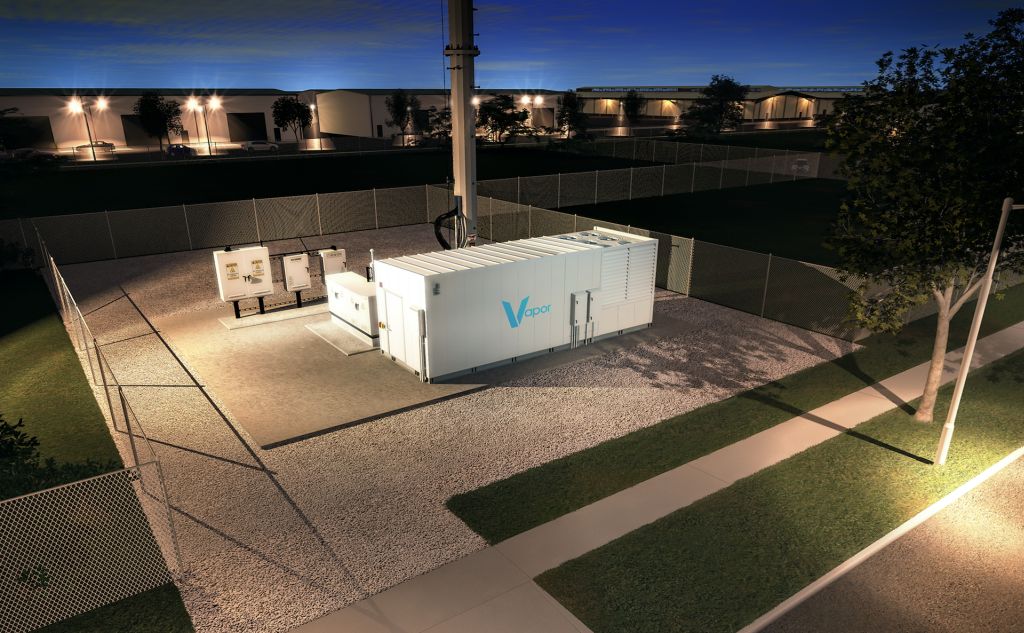

As ZDNet Scale reported in November 2017, Vapor IO makes a 9-foot diameter circular enclosure it calls the Vapor Chamber. It's designed to provide all the electrical facilities, cooling, ventilation, and stability that a very compact set of servers may require. It aims to enable same-day deployment of compute capability almost anywhere in the world, including temporary venues and, in the most lucrative use case of all, alongside 5G wireless transmission towers.

Since that report, public trials have begun of Vapor Chamber deployments in real-world edge/5G scenarios. The company calls this initial, experimental deployment schematic Kinetic Edge. Through its agreements with cellular tower owners including Crown Castle (which became an investor in Vapor IO last September), this schematic has Vapor IO stationing shipping container-like modules, with cooling components attached, at strategic locations across a metro area.

By stationing edge modules adjacent to existing cellular transmitters, Vapor IO leverages their existing fiber optic cable links to communicate with one another at minimum latency, at distances no greater than 20 km. Each module accommodates 44 server rack units (RU) and up to 150 kilowatts of server power, so a cluster of six fiber-linked modules would host 0.9 megawatts. While that's still less than 2 percent of the server power of a typical metropolitan colocation facility, from a colo leader such as Equinix or Digital Realty, consider how competitive such a scheme could become if Crown Castle were to install one Kinetic Edge module beside each of its more than 40,000 cell towers in North America. Theoretically, the capacity already exists to facilitate the computing power of greater than 700 metro colos.
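The capacity arithmetic in that paragraph can be reproduced in a few lines. The module, cluster, and tower figures come from the text; the two colocation facility sizes at the end are derived here rather than quoted, and they differ, which suggests the "typical metro colo" comparisons should be read as rough orders of magnitude.

```python
# Figures quoted in the text above.
MODULE_KW = 150            # maximum server power per edge module
MODULES_PER_CLUSTER = 6
TOWERS = 40_000            # "more than 40,000" towers, taken as a floor

cluster_mw = MODULE_KW * MODULES_PER_CLUSTER / 1000
fleet_mw = MODULE_KW * TOWERS / 1000

# Colo sizes implied by the two comparisons (derived, not quoted).
colo_mw_from_share = cluster_mw / 0.02   # cluster is "less than 2 percent" of a colo
colo_mw_from_fleet = fleet_mw / 700      # fleet matches "greater than 700" colos

print(f"one cluster: {cluster_mw} MW")
print(f"one module per tower: {fleet_mw:,.0f} MW")
print(f"colo size implied by the 2 percent figure: at least {colo_mw_from_share:.0f} MW")
print(f"colo size implied by the 700-colo figure: about {colo_mw_from_fleet:.1f} MW")
```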

"As you start building out this Kinetic Edge, through the combination of our software, fiber, the real estate we have access to, and the edge modules that we're deploying," said Vapor IO's Crawford, "we go from the resilience profile that would exist in a Tier-1 data center, to well beyond Tier-4. When you are deploying massive amounts of geographically disaggregated and distributed physical environments, all physically connected by fiber, you now have this highly resilient, physical world that can be treated like a highly connected, logical, single world."

AT&T DRAWS THE COMPUTE EDGE FURTHER BACK

Vapor IO has perhaps done more to popularize the notion of cell tower-based data centers than any other firm, particularly by spearheading the establishment of the Kinetic Edge Alliance. But perhaps seeing a startup seize a key stronghold from its grasp, AT&T has recently backed away from characterizing its network edge as a place within sight of civilian eyes. In a recent demonstration at its AT&T Foundry facilities in Plano, Texas, it showed how 5G connectivity could be leveraged to run a real-time, unmanned drone tracking application. The customer's application, in this case, was not deployed in a µDC, but instead in a data center that, at some later date, may be replaced with one of its own, existing Network Technology Centers (NTC).

It's AT&T's latest bid to capture the edge for itself and hold it closer to its own treasure chest. In response, Vapor IO has found itself tweaking its customer message.

A Vapor IO Kinetic Edge micro data center operating alongside a cellular tower.

"When we first started describing our Kinetic Edge platform for edge computing, we often used the image of a data center at the base of a cell tower to make it simple to understand," stated Matt Trifiro, Vapor IO's chief marketing officer, in a note to this reporter. "This was an oversimplification."

"We evaluate dozens of attributes," Trifiro continued, "including the availability of multi-substation power, proximity to population centers, and the availability of existing network infrastructure, when selecting Kinetic Edge locations. While many of our edge data centers do, in fact, have cell towers on the same property, they mainly serve as aggregation hubs that connect to many macro towers, small cells, and cable headends."

AT&T's demonstration, Trifiro maintained, limited itself to a two-tier Internet topology. Meanwhile, 5G network engineers (arguably enlisting the help of AT&T engineers themselves) have already worked out a method called serving gateway local breakout (SGW-LBO). Instead of directing incoming RAN traffic to the NTC and sorting it all out there, this technique can filter out customer-specific traffic, and direct that traffic to its edge computing systems.
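The filtering idea behind local breakout can be illustrated with a simple destination-based rule. This is a conceptual sketch only, not the 3GPP or ETSI procedure and not any vendor's implementation: the function name, the "edge" and "core" labels, and the address prefixes (taken from ranges reserved for documentation) are all hypothetical.

```python
import ipaddress

# Hypothetical prefixes for applications hosted at the network edge.
EDGE_PREFIXES = [ipaddress.ip_network("203.0.113.0/24")]


def next_hop(dst_ip):
    """Return 'edge' for traffic bound for an edge-hosted application,
    and 'core' for everything else, which follows its usual path."""
    addr = ipaddress.ip_address(dst_ip)
    if any(addr in net for net in EDGE_PREFIXES):
        return "edge"
    return "core"


print(next_hop("203.0.113.10"))   # destination inside the edge prefix
print(next_hop("198.51.100.7"))   # destination outside it
```

Only traffic matching the customer-specific rule is diverted; everything else continues toward the operator's own data center, which is the selective behavior the technique is described as providing.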

Athonet is one communications equipment provider that has already rolled out SGW-LBO to some telco customers, after its launch in February 2018. In a statement at the time, the company said, "The benefit of this approach is that it allows specific traffic (not all traffic) to be offloaded for key applications that are implemented at the network edge without impacting the existing network or breaking network security. . . We, therefore, believe that it is the optimal enabler for MEC."

"Are cell towers a factor in our site selection? Yes, absolutely, but not the factor," asserted Vapor IO's Trifiro. "Because all of our sites are tower-connected, we can employ a software-defined network that meshes together our sites with local tower assets, making it possible to route traffic to and from thousands of macro and small cells in a region. In this way, we enable the Kinetic Edge to span the entire middle-mile of a metropolitan area, connecting the cellular access networks to the regional data centers and the Tier-1 backbones using modern network topology."

WILL AN EDGE DATA CENTER BE CERTIFIABLE?

The data center industry has its own tiered classification system for official certification. It's different from the Internet tiers, in that the data center model starts small at Tier-1 and works up through increasing degrees of fault tolerance. At Tier-4, it permits less than one half-hour of downtime per year. Even Tier-3 certification requires a facility to have dual utility feeds, and to permit concurrent maintenance -- enabling components to be repaired or replaced while still operating. For a data center like the Kinetic Edge model to be treated seriously by the industry at large, someone may need to decide where it rests on this scale. And for now, Schneider Electric's Kevin Brown isn't certain where that will be.
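An availability percentage translates into allowable downtime with one multiplication. The sketch below uses the availability figures commonly cited for the upper two tiers (99.982 percent and 99.995 percent); those percentages are an assumption brought in for illustration and do not appear in this article.

```python
MINUTES_PER_YEAR = 365 * 24 * 60   # 525,600 minutes, ignoring leap years

# Commonly cited availability targets (assumed for illustration).
COMMONLY_CITED_AVAILABILITY = {
    "Tier-3": 0.99982,
    "Tier-4": 0.99995,
}


def downtime_minutes_per_year(availability):
    """Convert an availability fraction into minutes of downtime per year."""
    return (1 - availability) * MINUTES_PER_YEAR


for tier, availability in COMMONLY_CITED_AVAILABILITY.items():
    minutes = downtime_minutes_per_year(availability)
    print(f"{tier}: about {minutes:.1f} minutes of downtime per year")
```

With these inputs, the Tier-4 figure works out to a little over 26 minutes, consistent with a ceiling of under half an hour per year.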

"We are not going to see Tier-3 design methodologies applied at this local edge," Brown remarked. "What we are saying is, this local edge needs to become more resilient. And we don't know exactly what that's going to look like from a hardware standpoint. We may not know exactly what it entails, and we have no 'tier' classification standards that apply. But they have to become better than what they were in the past."

If the MEC model comes to full fruition, at the bases of cell towers or the bottoms of broom closets, it could revise and completely transform the Internet's Tier-3, while at the same time creating a data center classification "Tier-5."

Distinguishing edge devices from edge components

Both Dell and HPE have successfully marketed unique form factors for their otherwise mainline servers, as "edge devices" or "edge computing devices." But they're based on somewhat different strategies from one another, each following its manufacturer's distinct vision of what an edge computing customer requires.

AN EDGE DEVICE'S PLACE IN A MICRO DATA CENTER

HPE Edgeline EL8000

Last February, HPE premiered its Edgeline EL8000 series servers, which its marketing describes as "converged edge systems." The first thing you notice about it is its unusual form factor: It's not at all the usual rack width, and it's designed to be deployed by people who have more experience installing devices than debugging systems.

"The idea," explained HPE's Geetha Ram, "is to make sure that the platform can not only be managed remotely by non-skilled IT folks, but also make it very, very simple. Zero-touch management and deployment. . . all the way up to the applications. Drag-and-drop the OS, Docker, Kubernetes, Azure Stack, video analytics, AI."

The unspoken part of Ram's assertion here is that edge servers and edge devices would not only be managed by personnel outside of IT, but budgeted and accounted for by departments outside of IT. HPE is marketing edge components as appliances or devices to departments that have been charged with managing field operations as well as cloud-based services, because both have generally been considered "outside," or non-internal.

Dell's Jason Shepherd warns that an operations-based approach to edge deployments may lead to unjustified costs, especially when an organization adopts the mindset that an edge system can be dropped in place and left essentially to run on its own. He calls this the "Pi-in-the-sky" approach, with the "Pi" represented by a Raspberry Pi -- the miniature, single-board Linux computer most often associated with the concept of edge devices.

"In a lot of the market, because we're getting traction and because of this dynamic, we're seeing [where] you take a Raspberry Pi-class device, you hook it up to some public cloud, and you just get going," said Shepherd. "And a lot of the time, this OT person on the shop floor, they know their processes, they have their domain knowledge, they're doing 'shadow IT' completely bypassing IT, and they just get going and innovating. But they don't really think about what they're getting into. So before they know it. . . they start realizing the cost of pumping data mindlessly to some cloud, and then having to pay to get their own data back. Ouch. Then they have to re-architect."

Dell EMC Edge Gateway 5000

Ironically, it's Dell EMC that produces what it calls Edge Gateway devices, such as the Model 5000 pictured here. It could fit under an installer's arm, and is designed to be deployed in places like solar and wind turbine farms, where the generator itself is the only available power source. However, Dell emphasizes the EMC tie-in, which involves more direct connectivity with the organization's own storage networks rather than some public cloud storage blob. And it's marketed to organizations that either involve IT more in the management of edge devices and components, or that don't have a problem with teaching their OT departments IT skills.

IS THERE OVERLAP BETWEEN "EDGE COMPUTING" AND "FOG COMPUTING?"

Since 2014, Cisco has aggressively sought to inject its notion of "fog computing" into the public discussion of distributed systems. It's fair to say the company has been inconsistent with its definition, which is largely why, in any examination of a successful architecture, the interjection of fog, or "the fog," seems forced. Indeed, even though Cisco has recently taken active steps to distinguish the concepts of edge and fog from one another, experts briefed by Cisco have come away advising the press and clients that the two concepts are the same thing.

There is no truly consistent definition of fog computing, and for that reason, an organization could very easily deploy an Internet-of-Things application with field sensors and edge devices (including devices built by Cisco) and never utter the phrase. But in recent attempts, Cisco has tried to advance the idea that fog directly addresses the Pi-in-the-sky problem put forth by Dell's Shepherd: that an edge deployment that relies upon shuttling data back and forth from the cloud works against itself. The idea, advanced in a recent article by Cisco contributor David Linthicum, is that by pulling both compute and data storage capabilities out of the cloud and toward the point of data collection (in this case, out in the field), an organization takes a piece of the cloud with it, thus fog.
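To make the data-shuttling argument concrete, here is a minimal Python sketch of the general idea, not anything Cisco or Dell ships. It assumes a hypothetical window of 600 temperature readings and compares the size of sending every raw reading upstream against sending one summary record computed at the edge. The function names, the readings, and the JSON encoding are all assumptions made for illustration.

```python
import json
import statistics


def summarize_window(readings):
    """Reduce a window of raw sensor readings to a small summary record."""
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": statistics.fmean(readings),
    }


def payload_bytes(obj):
    """Size in bytes of the JSON-encoded payload that would be sent upstream."""
    return len(json.dumps(obj).encode("utf-8"))


# Hypothetical window: 600 readings (one per second for ten minutes).
readings = [20.0 + (i % 10) * 0.1 for i in range(600)]

raw_size = payload_bytes(readings)                        # ship everything to the cloud
summary_size = payload_bytes(summarize_window(readings))  # ship only the edge summary

print(f"raw: {raw_size} bytes, summary: {summary_size} bytes")
```

The summary discards detail, which is the trade-off: an organization would have to decide which raw data is worth keeping locally and which is worth paying to move.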

"Fog and edge could create a tipping point, of sorts," writes Linthicum. "Network latency limited IoT's full evolution and maturity given the limited processing that can occur at sensors. Placing a micro-platform at the IoT device, as well as providing tools and approaches to leverage this platform, will likely expand the capabilities of IoT systems and provide more use cases for IoT in general."

PROSPECTS

It is a mistake to presume that edge computing is a phenomenon that will eventually, entirely, absorb the space of the public cloud. Indeed, it's the very fact that the edge can be visualized as a place unto itself, separate from lower-order processes, that gives rise to both its real-world use cases and its someday/somehow, imaginary ones. It was also a mistake, in perfect hindsight, to presume the disruptive economic force of cloud dynamics could completely commoditize the computing market, such that a VM from one provider is indistinguishable from a VM from another, or that the cloud will always feel like next-door regardless of where you reside on the planet.

Yet it's very difficult, when plotting the exact specifications for what any service provider's or manufacturer's edge services, facilities, or equipment should be, not to get caught up in the excitement of the moment and imagine the edge as a line that spans all classes and all contingencies, from sea to shining sea. Like most technologies conceived and implemented this century, it's being delivered at the same time it's being assembled. Half of it is principle and the other half promise.

Once you obtain a beachhead in any market, it's hard not to want to drive further inland. That's where the danger lies: where the idea of retrofitting the Internet with quality of service can make anyone lose, to coin a phrase, their edge.

According to ZDNet.com